Using the Lambda Layer in Keras: A Detailed Guide

Without further ado, let's go straight to the code.

    # NOTE: written for TensorFlow 1.x (Dimension.value and tf.image.resize_images).
    import tensorflow as tf
    from tensorflow.python.keras.models import Sequential, Model
    from tensorflow.python.keras.layers import (Dense, Flatten, Conv2D, MaxPool2D, Dropout,
                                                Conv2DTranspose, Lambda, Input, Reshape,
                                                Add, Multiply)
    from tensorflow.python.keras.optimizers import Adam

    # Assumed input shape: the original snippet never defines inputs_shape.
    # Height and width should be multiples of 8 so the output size matches the input.
    inputs_shape = (256, 256, 3)


    def deconv(x):
        """Upsample a feature map to twice its height and width (nearest neighbour)."""
        height = x.get_shape()[1].value
        width = x.get_shape()[2].value
        new_height = height * 2
        new_width = width * 2
        x_resized = tf.image.resize_images(
            x, [new_height, new_width], tf.image.ResizeMethod.NEAREST_NEIGHBOR)
        return x_resized


    def Generator(scope='generator'):
        imgs_noise = Input(shape=inputs_shape)

        # Encoder: one full-resolution convolution, then two stride-2 convolutions.
        x = Conv2D(filters=32, kernel_size=(9, 9), strides=(1, 1), padding='same', activation='relu')(imgs_noise)
        x = Conv2D(filters=64, kernel_size=(3, 3), strides=(2, 2), padding='same', activation='relu')(x)
        x = Conv2D(filters=128, kernel_size=(3, 3), strides=(2, 2), padding='same', activation='relu')(x)

        # Three residual blocks: two convolutions plus a skip connection each.
        x1 = Conv2D(filters=128, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu')(x)
        x1 = Conv2D(filters=128, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu')(x1)
        x2 = Add()([x1, x])

        x3 = Conv2D(filters=128, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu')(x2)
        x3 = Conv2D(filters=128, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu')(x3)
        x4 = Add()([x3, x2])

        x5 = Conv2D(filters=128, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu')(x4)
        x5 = Conv2D(filters=128, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu')(x5)
        x6 = Add()([x5, x4])

        x = MaxPool2D(pool_size=(2, 2))(x6)

        # Decoder: each Lambda(deconv) doubles the spatial size, followed by a convolution.
        x = Lambda(deconv)(x)
        x = Conv2D(filters=64, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu')(x)
        x = Lambda(deconv)(x)
        x = Conv2D(filters=32, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu')(x)
        x = Lambda(deconv)(x)
        x = Conv2D(filters=3, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='tanh')(x)

        # Map the tanh output from [-1, 1] to [0, 255].
        x = Lambda(lambda x: x + 1)(x)
        y = Lambda(lambda x: x * 127.5)(x)

        model = Model(inputs=imgs_noise, outputs=y)
        model.summary()
        return model


    my_generator = Generator()
    my_generator.compile(loss='binary_crossentropy', optimizer=Adam(0.7, decay=1e-3), metrics=['accuracy'])
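
To sanity-check the generator above, the spatial size after each stage can be traced by hand. What follows is a minimal sketch in plain Python, not part of the original code. The helper names (same_conv, max_pool, upsample, trace_generator, to_pixel) and the 256 and 100 pixel inputs are illustrative assumptions. It relies on the usual rules that a convolution with padding='same' yields ceil(n / stride) and that a 2x2 MaxPool2D with the default 'valid' padding yields floor(n / 2).

```python
import math


def same_conv(size, stride):
    """Spatial size after a Conv2D with padding='same'."""
    return math.ceil(size / stride)


def max_pool(size, pool=2):
    """Spatial size after MaxPool2D with 'valid' padding and stride == pool size."""
    return size // pool


def upsample(size, factor=2):
    """Spatial size after the nearest-neighbour resize in deconv()."""
    return size * factor


def trace_generator(size):
    """Return (stage, size) pairs for one spatial dimension of the generator."""
    stages = [("input", size)]
    size = same_conv(size, 1)
    stages.append(("conv 9x9, stride 1", size))
    size = same_conv(size, 2)
    stages.append(("conv 3x3, stride 2", size))
    size = same_conv(size, 2)
    stages.append(("conv 3x3, stride 2", size))
    # The residual blocks use stride-1 'same' convolutions, so the size is unchanged.
    stages.append(("residual blocks", size))
    size = max_pool(size)
    stages.append(("max pool 2x2", size))
    for i in range(3):
        # Each deconv() is followed by a stride-1 'same' convolution, which keeps the size.
        size = upsample(size)
        stages.append(("upsample %d" % (i + 1), size))
    return stages


def to_pixel(tanh_value):
    """Map a tanh output in [-1, 1] to [0, 255], as the last two Lambda layers do."""
    return (tanh_value + 1) * 127.5


for stage, size in trace_generator(256):
    print(stage, size)

print(trace_generator(100)[-1])  # ('upsample 3', 96): 100 is not a multiple of 8
print(to_pixel(-1.0), to_pixel(0.0), to_pixel(1.0))  # 0.0 127.5 255.0
```

The trace shows that the three downsampling steps (two stride-2 convolutions and one pooling layer) are undone exactly by the three Lambda(deconv) layers only when the input size is divisible by 8.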

Additional notes: problems when saving a Keras model that contains custom Lambda layers, and a workaround

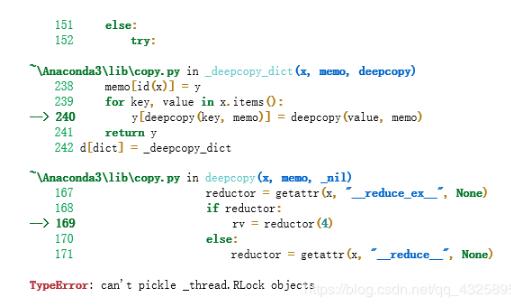

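1. In many applications the layers that ship with Keras are not enough, and some layers have to be implemented as custom Lambda layers. In that situation model.save can fail, so only the model weights can be saved. The error raised on saving is: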

TypeError: can't pickle _thread.RLock objects
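
As far as I know, a common trigger for this error is a Lambda whose function or arguments hold a reference to a tensor or to some other object tied to the TensorFlow graph or session. When Keras serializes the layer it copies those values, and objects that contain thread locks cannot be copied or pickled. The sketch below reproduces the same class of error in plain Python. GraphBoundObject is a hypothetical stand-in, not a Keras or TensorFlow class, and the config dictionaries only imitate what a layer config might hold.

```python
import copy
import threading


class GraphBoundObject:
    """Hypothetical stand-in for an object that owns a thread lock."""

    def __init__(self):
        self._lock = threading.RLock()


def try_copy(config):
    """Deep-copy a config dict; return the TypeError message, or None on success."""
    try:
        copy.deepcopy(config)
    except TypeError as exc:
        return str(exc)
    return None


# A config holding a lock-owning object cannot be deep-copied.
bad_config = {"function": "scale", "arguments": {"factor": GraphBoundObject()}}
# A config holding only plain Python values copies without trouble.
good_config = {"function": "scale", "arguments": {"factor": 127.5}}

bad_error = try_copy(bad_config)
good_error = try_copy(good_config)

print(bad_error)   # a TypeError message that mentions RLock (wording varies by Python version)
print(good_error)  # None
```

Under that assumption, keeping only plain Python values (numbers, strings, lists) in whatever the Lambda captures is one way to avoid the error, independently of the PB conversion described next.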

2. Solution: to make later deployment easier, rebuild the model in code, load the saved weights, and convert it to a TensorFlow PB file.

    import os
    import sys

    import tensorflow as tf
    from keras import backend as K
    from keras.layers import Input, Dense
    from keras.models import Model
    from tensorflow.python.framework import graph_util, graph_io


    def h5_to_pb(h5_weight_path, output_dir, out_prefix="output_", log_tensorboard=True):
        """Rebuild the model, load its weights, and freeze the graph into a .pb file."""
        if not os.path.exists(output_dir):
            os.mkdir(output_dir)

        h5_model = build_model()
        h5_model.load_weights(h5_weight_path)

        # Give every model output a predictable node name: output_1, output_2, ...
        out_nodes = []
        for i in range(len(h5_model.outputs)):
            out_nodes.append(out_prefix + str(i + 1))
            # Use outputs (always a list); output is a single tensor for one-output models.
            tf.identity(h5_model.outputs[i], out_prefix + str(i + 1))

        # Name the .pb file after the weight file.
        model_name = os.path.splitext(os.path.split(h5_weight_path)[-1])[0] + '.pb'

        # Freeze the graph: replace variables with constants holding their current values.
        sess = K.get_session()
        init_graph = sess.graph.as_graph_def()
        main_graph = graph_util.convert_variables_to_constants(sess, init_graph, out_nodes)
        graph_io.write_graph(main_graph, output_dir, name=model_name, as_text=False)

        if log_tensorboard:
            from tensorflow.python.tools import import_pb_to_tensorboard
            import_pb_to_tensorboard.import_to_tensorboard(os.path.join(output_dir, model_name), output_dir)


    def build_model():
        """Example model; it must match the architecture the weights were saved from."""
        inputs = Input(shape=(784,), name='input_img')
        x = Dense(64, activation='relu')(inputs)
        x = Dense(64, activation='relu')(x)
        y = Dense(10, activation='softmax')(x)
        h5_model = Model(inputs=inputs, outputs=y)
        return h5_model


    if __name__ == '__main__':
        if len(sys.argv) == 3:
            # usage: python3 h5_to_pb.py h5_weight_path output_dir
            h5_to_pb(h5_weight_path=sys.argv[1], output_dir=sys.argv[2])
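
For reference, the file name and output node names that h5_to_pb produces follow directly from its arguments. The sketch below isolates that naming logic in plain Python so it can be checked without TensorFlow. pb_file_name and output_node_names are hypothetical helpers written for illustration, and weights/mnist_mlp.h5 is a made-up path.

```python
import os


def pb_file_name(h5_weight_path):
    """Name of the .pb file: the weight file's base name with a .pb extension."""
    return os.path.splitext(os.path.split(h5_weight_path)[-1])[0] + ".pb"


def output_node_names(num_outputs, out_prefix="output_"):
    """Node names given to the model outputs, numbered from 1."""
    return [out_prefix + str(i + 1) for i in range(num_outputs)]


print(pb_file_name("weights/mnist_mlp.h5"))     # mnist_mlp.pb
print(output_node_names(1))                     # ['output_1']
print(output_node_names(2, out_prefix="out_"))  # ['out_1', 'out_2']
```

When the frozen graph is loaded for inference, tensors are addressed by node name plus an output index, so with the example model the input would be input_img:0 and the prediction output_1:0.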

That is everything I have to share in this detailed look at the Keras Lambda layer. I hope it serves as a useful reference, and thank you for your support.
